What if one of the most repeated claims about the evolution of hardware has been consistently misinterpreted? That is the case with Moore’s Law, which is cited constantly but rarely correctly.

What is Moore’s Law?

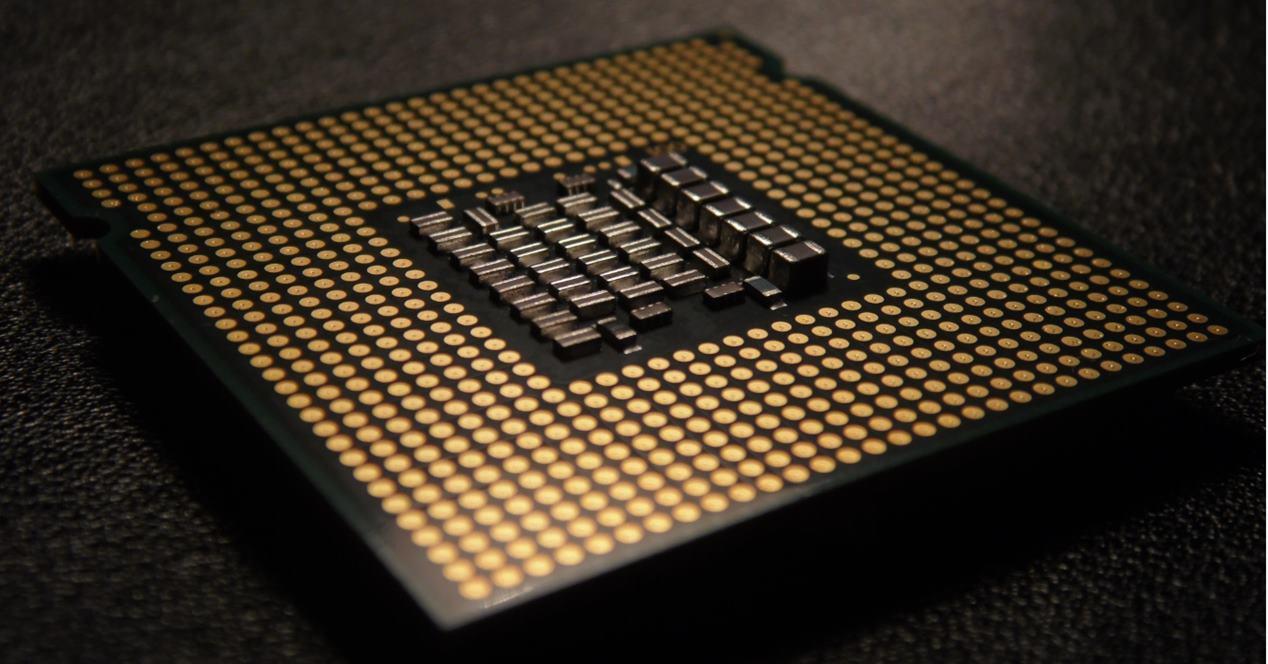

In 1965 Gordon Moore, who would later co-found Intel, predicted that the number of transistors per unit area would double every year, a prediction he revised in 1975 to a doubling every two years. Moore’s statement did not first appear as a scientific paper but as an article in Electronics magazine, published on April 19, 1965, in which he said that by 1975 there would be integrated circuits with 65,000 components.

In Moore’s own words:

The complexity for minimum component costs has increased at a rate of roughly a factor of two per year. Certainly over the short term this rate can be expected to continue, if not to increase. Over the longer term, the rate of increase is a bit more uncertain, although there is no reason to believe it will not remain nearly constant for at least 10 years. That means by 1975, the number of components per integrated circuit for minimum cost will be 65,000.
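The arithmetic behind the 65,000 figure can be sketched in a few lines. This is only an illustration: the starting point of about 64 components per chip in 1965 is an assumption not stated in the article, and `projected_components` is a hypothetical helper name.

```python
def projected_components(start_count: int, start_year: int, target_year: int,
                         doubling_period_years: float = 1.0) -> float:
    """Project a component count that doubles every `doubling_period_years`."""
    doublings = (target_year - start_year) / doubling_period_years
    return start_count * 2 ** doublings


# Assumption: roughly 64 components per chip in 1965 (not given in the article).
yearly = projected_components(64, 1965, 1975)         # doubling every year
biennial = projected_components(64, 1965, 1975, 2.0)  # doubling every two years

print(yearly)    # 65536.0
print(biennial)  # 2048.0
```

Under that assumption, ten yearly doublings land almost exactly on the 65,000 components Moore mentions, while the slower two-year rate would reach only about 2,000 over the same decade.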

Moore’s Law therefore concerns the density of transistors per unit area, not performance, as is often claimed. Much of the performance gain came from Dennard scaling, which broke down around the 65nm node. To be clear: Moore’s Law was never about performance, but about transistor density at a given cost.

The way the size reduction is achieved is simple: with each new node, the linear dimensions of the transistors are scaled by a factor of 0.7. Because area scales with the square of that factor, the same circuit occupies 0.7 × 0.7 = 0.49 of its previous area, roughly half, so about twice as many transistors fit in the same space. However, Moore’s Law has been misinterpreted by leaving out an essential part of its statement.
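The relationship between the 0.7 shrink and the halving of area is just a square, which a short sketch makes explicit. The function names here are illustrative, not taken from any library.

```python
LINEAR_SHRINK = 0.7  # per-node scale factor for each linear dimension (from the text)


def area_scale(linear_scale: float) -> float:
    """Area scales with the square of the linear dimension."""
    return linear_scale ** 2


def density_gain(linear_scale: float) -> float:
    """Transistors per unit area grow by the inverse of the area scale."""
    return 1.0 / area_scale(linear_scale)


print(round(area_scale(LINEAR_SHRINK), 2))    # 0.49
print(round(density_gain(LINEAR_SHRINK), 2))  # 2.04
```

A 0.7 linear shrink therefore gives slightly more than a doubling of density per node.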

Why has Moore’s Law been misinterpreted?

In the 1960s there were no processors as we know them today; a single processor was built from several separate chips which, over time, would end up on the same die. The increase in transistors per unit area has led to the gradual integration of the different elements of the processor.

Gordon Moore spoke of “at minimum cost”, and this is where the original claim and the development of new technology have drifted apart. Over the past few years, we have seen the cost per unit area increase with each node. Moore’s Law also has a counterpart, or complementary law: Moore’s Second Law, also known as Rock’s Law.

What does Rock’s Law say? That the cost of a chip fabrication plant doubles every four years. This conflicts with Moore’s Law as most people understand it, because they always leave out the minimum-cost part. The popular version of Moore’s Law is therefore a misreading: it treats Moore’s observation as if it had been made without factoring in manufacturing costs.
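To get a feel for how the two doubling rates compare, the sketch below compounds each one over the same period. It assumes the two-year density doubling from Moore’s 1975 revision and the four-year cost doubling described here; the 20-year window and the helper name `growth_factor` are arbitrary choices for illustration.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """Multiplicative growth after `years` for a quantity that doubles
    every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)


YEARS = 20  # arbitrary window chosen for illustration

density_growth = growth_factor(YEARS, 2)  # density doubling every two years
cost_growth = growth_factor(YEARS, 4)     # cost doubling every four years

print(density_growth)  # 1024.0
print(cost_growth)     # 32.0
```

Both quantities grow exponentially, so neither can be ignored: a cost that is 32 times higher after two decades is part of the same picture as a density that is 1,024 times higher.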

The end of Rock’s Law

The problem with Rock’s Law is that, while Moore’s prediction remained correct for a good portion of that time, Rock’s Law did not advance at the same pace, and the cost per unit area stopped keeping step with the gains in density. The change began in the mid-90s with very slight effects, but in recent nodes it has pushed the cost of processors up more than expected.

In other words, from one generation to the next, processors of the same area are more and more expensive. If we look only at transistor density, Moore’s Law is still valid, but not at minimum cost. Moore’s Law has therefore been misinterpreted, and its end is not due to density, but to costs.
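A small sketch can show why density alone is not enough. All numbers below are hypothetical and chosen only to illustrate the argument; they are not real node prices or densities.

```python
def cost_per_transistor(cost_per_mm2: float, transistors_per_mm2: float) -> float:
    """Cost of one transistor given the cost and density of a unit of area."""
    return cost_per_mm2 / transistors_per_mm2


# Hypothetical values, for illustration only.
old_node = cost_per_transistor(cost_per_mm2=1.0, transistors_per_mm2=100e6)
# Density doubles, but the cost per unit area rises by 2.5x.
new_node = cost_per_transistor(cost_per_mm2=2.5, transistors_per_mm2=200e6)

print(f"{new_node / old_node:.2f}x")  # 1.25x
```

In this made-up case the density still doubles, as the popular reading of Moore’s Law expects, yet each transistor costs more than before, which is exactly the "at minimum cost" condition failing.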