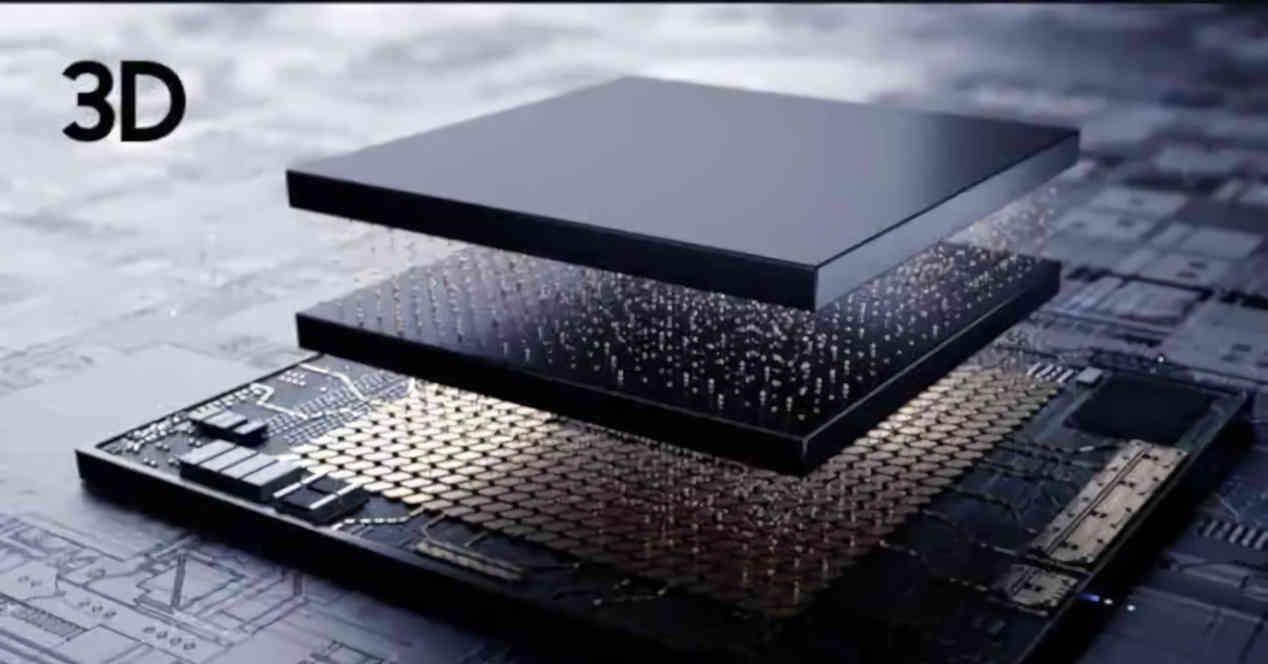

The addition of AMD’s “V-Cache” to some upcoming Zen 3-based processors raises the question: does it make sense to expand a processor cache to stratospheric levels in order to gain performance? Stacking an additional cache vertically is not something that only AMD can do: vertical interconnects between chips allow an SRAM die to be connected directly on top of an APU, a CPU or a GPU without problems.

So what AMD has been doing in the background is nothing special, although it is true that they were pioneers in applying 3D-stacked SRAM to expand the total cache in CCD chiplets. It should be noted that vertical SRAM used as cache will always be the last-level cache (LLC) of a processor, so its implementation will change depending on the type of processor we are talking about.

Vertical cache only at the last level

The reality is that today practically all processors are SoCs, in the sense that they combine several processing elements, which can be homogeneous (all cores of the same type) or heterogeneous (cores of different types). What they have in common is the northbridge, which Intel calls “Uncore” and AMD calls “Data Fabric”. Whatever the name, we are really talking about the same concept.

What does this have to do with the vertical cache? Because of its position on the chip, the vertical cache in an SoC can never be placed before the memory controller in the hierarchy. In Zen 3 CCD chiplets there is no memory controller, because it sits in the IOD, which is a separate chip. In a hypothetical APU, however, the vertical cache would be located above the memory controller: just before RAM in the hierarchy, but after the highest cache level of the CPU or GPU.

Vertical cache instead of vertical memory

Under this assumption, the ideal would be for RAM to be as close as possible to the processor. How about stacking the full RAM on the processor through a 3D interface? That does not work, because current RAM requires capacities in the tens of gigabytes. But how about stacking more and more memory layers on the processor? After all, it can be done today.

It sounds like a great idea and it is possible to do, but it stops being one when you consider that with each new memory layer, the number of complete, working assemblies of processor plus stacked memory decreases. Nor can heat be forgotten: each new layer forces the clock speed of both the memory and the processor down. Suddenly, what seemed like a good idea no longer is: we end up with a processor that is very expensive to manufacture, available in few units, and with worse performance than the separate parts.
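
The effect of adding layers on the number of complete, working assemblies can be illustrated with a rough sketch. It assumes, purely for illustration, that every stacked layer (die plus bonding step) succeeds independently with the same probability; the function name `stack_yield` and the 90% figure are hypothetical and do not come from any manufacturer.

```python
def stack_yield(per_layer_yield, layers):
    """Fraction of assemblies in which every stacked layer works.

    Assumes (hypothetically) that each layer succeeds independently
    with the same probability, so the yields simply multiply.
    """
    return per_layer_yield ** layers


# Hypothetical 90% success rate per stacked layer, for 1 to 4 layers.
yields = [round(stack_yield(0.9, n), 4) for n in range(1, 5)]

for n, y in enumerate(yields, start=1):
    print(f"{n} layer(s): {y:.2%} of assemblies complete")
```

Under these assumptions the share of usable assemblies shrinks with every extra layer, which is one reason a single layer is the more economical choice.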

It is therefore preferable to place a single layer above the processor. Since that memory does not have enough capacity to function as RAM today, it works as a last-level cache instead.

Vertical cache performance on processors

The sole purpose of each processor's cache is to reduce the CPU's access time to RAM. In recent times, however, moving data in the necessary amounts has come at a huge energy cost, so architects have to find ways to reduce the number of times the CPU or GPU accesses its memory. This is why a large last-level cache matters here.

The vertical cache is therefore not intended to increase the raw processing capacity of a CPU, but how much of that capacity it actually delivers. We have to understand that a system never reaches 100% of its theoretical performance; there are always losses. In a processor, a significant portion of those losses comes from communication with the memory hierarchy, which includes the caches.

As the last level, the vertical cache serves the data needed by all the previous cache levels, and its huge size reduces the number of CPU accesses to RAM. Its efficiency will never be 100%, however: even with a very large cache, a program can request a memory address that has not been copied into the last-level cache, forcing the processor to access RAM.
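
A small sketch can make this trade-off concrete. It computes the average memory access time for a cache hierarchy and the fraction of accesses that still end up in RAM. All latencies and hit rates below are invented for illustration and are not measurements of any real processor; the function name `average_access_time` is likewise hypothetical.

```python
def average_access_time(levels, ram_latency_ns):
    """Return (average access time in ns, fraction of accesses reaching RAM).

    levels: list of (lookup_latency_ns, local_hit_rate) ordered from the
    first cache level to the last-level cache. The local hit rate is the
    share of lookups arriving at that level which it can serve.
    """
    total_ns = 0.0
    reach = 1.0  # fraction of all accesses that get as far as this level
    for latency_ns, hit_rate in levels:
        total_ns += reach * latency_ns   # every lookup reaching the level pays its latency
        reach *= 1.0 - hit_rate          # only the misses continue downwards
    total_ns += reach * ram_latency_ns   # what is left has to go to RAM
    return total_ns, reach


RAM_NS = 80.0  # hypothetical RAM latency

# Hypothetical hierarchy: L1, L2 and a normal-sized last-level cache.
small_time, small_ram = average_access_time(
    [(1.0, 0.90), (4.0, 0.60), (12.0, 0.50)], RAM_NS)

# Same hierarchy, but the last level is much larger and hits more often.
large_time, large_ram = average_access_time(
    [(1.0, 0.90), (4.0, 0.60), (12.0, 0.75)], RAM_NS)

print(f"normal LLC: {small_time:.2f} ns average, {small_ram:.1%} of accesses reach RAM")
print(f"large LLC:  {large_time:.2f} ns average, {large_ram:.1%} of accesses reach RAM")
```

With these made-up numbers the larger last level halves the accesses that reach RAM, but the fraction never drops to zero unless its hit rate is exactly 100%.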

How is the performance of a cache measured?

When the processor looks for a piece of data or an instruction in the cache hierarchy, it starts with the level closest to the processor itself. If it finds the data there, we call it a “hit”, as in having aimed at the cache and hit the target. If the data is not at that cache level, the lookup has failed and has to continue at the lower levels. We call this a “miss”, in the sense that the CPU missed its target when looking for the necessary data or instructions. While the data is being fetched, the CPU or GPU suffers a “stall”, since it has no data to proceed with.
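
The lookup order can be sketched with a toy simulation. It assumes a simplified two-level hierarchy with least-recently-used replacement, where every level that missed receives a copy of the data afterwards; the class `CacheLevel`, the function `access` and the tiny capacities are hypothetical and chosen only to keep the example readable.

```python
from collections import OrderedDict


class CacheLevel:
    """One cache level with LRU replacement (a simplification)."""

    def __init__(self, name, capacity):
        self.name = name
        self.capacity = capacity
        self.lines = OrderedDict()  # address -> True, oldest entry first
        self.hits = 0
        self.misses = 0

    def lookup(self, addr):
        if addr in self.lines:
            self.lines.move_to_end(addr)  # mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        return False

    def fill(self, addr):
        self.lines[addr] = True
        self.lines.move_to_end(addr)
        if len(self.lines) > self.capacity:
            self.lines.popitem(last=False)  # evict the least recently used


def access(hierarchy, addr):
    """Search the levels in order; return the name of the level that hit, or 'RAM'."""
    missed = []
    served_by = "RAM"
    for level in hierarchy:
        if level.lookup(addr):
            served_by = level.name
            break
        missed.append(level)
    for level in missed:  # copy the data into every level that missed
        level.fill(addr)
    return served_by


l1 = CacheLevel("L1", capacity=2)
llc = CacheLevel("LLC", capacity=8)
trace = [0, 1, 2, 0, 1, 2, 2]
served = [access([l1, llc], addr) for addr in trace]
print(served)
print(f"L1: {l1.hits} hits, {l1.misses} misses; LLC: {llc.hits} hits, {llc.misses} misses")
```

In this trace the first three accesses miss everywhere and go to RAM, the repeated addresses are then caught by the larger last level, and only the final access hits in the small first level. Every miss in the sketch corresponds to time the processor would spend stalled.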

In the case of the vertical cache, since it is not one of the first cache levels, its existence cannot spare a lookup from passing through the levels before it. We have already explained that it is impossible to turn the first cache levels into a vertical cache, especially in a world where processors have long been multi-core and the additional cache levels serve to let groups of cores communicate with each other, in some cases even cores of different kinds.

Performance on GPUs and other types of processors

In GPUs, we have seen AMD's Infinity Cache, a type of large cache that could be moved on top of the GPU and separated from the main die. At the moment this would not give any significant performance increase, but a GPU with a vertical cache could have a much higher capacity than the 128 MB of the Navi 21 GPU. Add a more advanced manufacturing node and the absence of area limits, and the last-level cache could reach 512 MB or even 1 GB.

What would be special about a GPU with a 1 GB cache? Regarding ray tracing, tests have been carried out showing that keeping certain data close to the GPU greatly increases performance when rendering ray-traced scenes. It is therefore possible that future GPUs from NVIDIA, Intel and AMD will implement a vertical cache to increase their performance in ray-traced scenes, which will become more and more common.

Another important market is that of flash memory controllers, which today use DDR4 or LPDDR4 memory chips as a cache. Implementing a vertical cache in these controllers would be enough to remove the DRAM and make NVMe SSDs cheaper.
