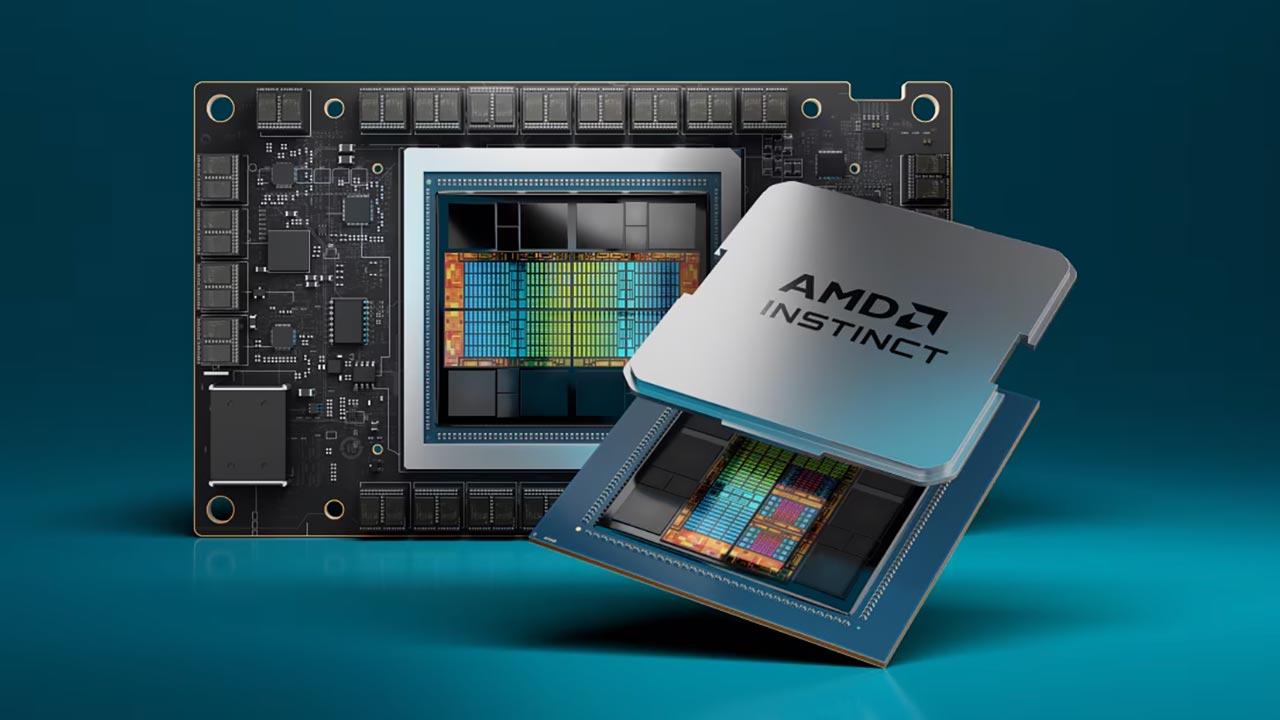

Developing the heterogeneous Exascale processor, which was key to AMD winning the El Capitan construction contract, required a new type of interface so that the CPU and the GPU could communicate in an environment where both share the same memory. This forced AMD to develop a new communication interface, one that solves a problem AMD had not previously been able to solve.

Why haven’t we seen a chiplet-based GPU yet?

The reason we haven’t seen a dedicated GPU in an MCM that shares memory access with the CPU is that the bandwidth provided by the IOD is not large enough to feed a GPU. In an MCM with a unified memory system, we are talking about using the GPU’s Infinity Cache as the system’s L4 cache.

Why is Infinity Fabric not enough? Because it provides an interface of only 32 or 64 bytes / cycle, depending on the version.

Now imagine that we want to connect a Navi 21 (RX 6800, RX 6800 XT and RX 6900 XT). Between the L2 cache inside the GPU and the Infinity Cache there are 16 L2 cache partitions with a bandwidth of 64 bytes / cycle each, or 1024 bytes / cycle in total, which amounts to an 8192-bit interface. To communicate with a GPU that uses the same memory pool, AMD engineers therefore need a communication interface far wider and more complex than the Infinity Fabric.
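To make the gap concrete, the arithmetic can be sketched in a few lines of Python. The partition count and per-partition width are the figures quoted in the text; the clock frequency is a purely hypothetical value chosen for illustration, not an AMD specification.

```python
# Figures quoted in the article.
L2_PARTITIONS = 16
BYTES_PER_CYCLE_PER_PARTITION = 64
INFINITY_FABRIC_BYTES_PER_CYCLE = (32, 64)  # depending on the version

# Hypothetical clock, used only to turn bytes/cycle into GB/s.
ASSUMED_CLOCK_HZ = 1.0e9


def aggregate_bytes_per_cycle(partitions: int, bytes_each: int) -> int:
    """Total bytes moved per cycle across all partitions."""
    return partitions * bytes_each


def interface_width_bits(bytes_per_cycle: int) -> int:
    """Width in bits of an interface moving that many bytes per cycle."""
    return bytes_per_cycle * 8


def bandwidth_gb_per_s(bytes_per_cycle: int, clock_hz: float) -> float:
    """Bandwidth in GB/s at the given clock frequency."""
    return bytes_per_cycle * clock_hz / 1e9


total = aggregate_bytes_per_cycle(L2_PARTITIONS, BYTES_PER_CYCLE_PER_PARTITION)
print(f"L2 <-> Infinity Cache: {total} bytes/cycle, "
      f"{interface_width_bits(total)}-bit interface")

for fabric in INFINITY_FABRIC_BYTES_PER_CYCLE:
    print(f"Infinity Fabric at {fabric} bytes/cycle is {total // fabric}x narrower")

print(f"At the assumed clock: {bandwidth_gb_per_s(total, ASSUMED_CLOCK_HZ):.0f} GB/s")
```

Under these numbers the GPU-side interface is 16 to 32 times wider than a single Infinity Fabric link, which is the shortfall the text describes.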

The problem with the Infinity Fabric when it comes to connecting multiple chips externally is that, being a horizontal 2D connection, it can only carry a limited number of pins before the perimeter of the chip has to grow significantly. The other way to increase the bandwidth is to raise the clock speed of each pin, but that would significantly increase power consumption and, with it, cost.

X3D, the replacement for the Infinity Fabric

When AMD announced X3D, many thought it was a type of packaging. It really isn’t. Rather, it is a new type of interconnect like the Infinity Fabric, except that it works vertically on an interposer, in a configuration very similar to that used in chips accompanied by HBM memory.

The idea is to have a communication interface with a power consumption close to 0.2 pJ / bit, which allows ten times the bandwidth of the Infinity Fabric for the same power consumption.
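The relation between energy per bit and link power is simply power = energy per bit × bits per second. The sketch below applies it to the 0.2 pJ / bit figure from the text. The 2.0 pJ / bit value for the Infinity Fabric is only what the article's "ten times" claim implies, not an official number, and the 1 TB/s target bandwidth is a hypothetical example.

```python
X3D_PJ_PER_BIT = 0.2                # figure quoted in the article
IMPLIED_FABRIC_PJ_PER_BIT = 2.0     # assumption: derived from the "ten times" claim
EXAMPLE_BANDWIDTH_BYTES_PER_S = 1e12  # hypothetical 1 TB/s link


def link_power_watts(energy_pj_per_bit: float, bandwidth_bytes_per_s: float) -> float:
    """Power spent moving data: joules per bit times bits per second."""
    bits_per_s = bandwidth_bytes_per_s * 8
    return energy_pj_per_bit * 1e-12 * bits_per_s


for name, pj in (("X3D", X3D_PJ_PER_BIT),
                 ("Infinity Fabric (implied)", IMPLIED_FABRIC_PJ_PER_BIT)):
    watts = link_power_watts(pj, EXAMPLE_BANDWIDTH_BYTES_PER_S)
    print(f"{name}: {watts:.1f} W to move 1 TB/s")
```

With these assumptions the same 1 TB/s costs roughly 1.6 W at 0.2 pJ / bit and roughly 16 W at 2 pJ / bit, which is the tenfold difference the text refers to.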

In fact, AMD lags behind Intel

But the reason behind these technologies lies in the development of supercomputers capable of reaching 1 ExaFLOPS.

The idea is that an architecture can be limited as much by the bandwidth its operations need as by the number of operations it can perform. Of course, if we significantly increase the processing capacity, we must do the same with memory. But is the memory available to us sufficient to keep up with the evolution in processing?

The evolution of RAM has been very monotonous: every so often a new manufacturing node appears, which helps reduce the power consumed in data transfer. But RAM is not evolving fast enough to cope with the challenges faced by processor manufacturers such as Intel and AMD, which is leading them to fix the problem on their own.

The need for a new kind of RAM

The problem is that every system has a bandwidth limited by the power budget assigned to it. No matter how much we try to increase the bandwidth, we reach a point where we cannot increase it further because the memory's power consumption becomes too high. That is why memories with ever lower pJ / bit figures are continuously being developed.

But what consumes the most energy? The communication interfaces used to move data. The idea is to reach a pJ / bit figure low enough not only to connect the GPU chips without problems, but also to build memories with high bandwidth without considerably increasing power consumption.
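Read the other way around, a fixed power budget puts a ceiling on bandwidth, and lowering the pJ / bit figure raises that ceiling. The following sketch illustrates this; the 10 W budget and the list of energy figures are hypothetical values picked for illustration (only 0.2 pJ / bit appears in the article).

```python
ASSUMED_POWER_BUDGET_WATTS = 10.0          # hypothetical budget for data movement
EXAMPLE_PJ_PER_BIT = (4.0, 2.0, 0.2)       # hypothetical, except 0.2 from the text


def max_bandwidth_gb_per_s(power_budget_watts: float, energy_pj_per_bit: float) -> float:
    """Highest bandwidth (GB/s) sustainable within the power budget."""
    bits_per_s = power_budget_watts / (energy_pj_per_bit * 1e-12)
    return bits_per_s / 8 / 1e9


for pj in EXAMPLE_PJ_PER_BIT:
    ceiling = max_bandwidth_gb_per_s(ASSUMED_POWER_BUDGET_WATTS, pj)
    print(f"{pj} pJ/bit -> at most {ceiling:.0f} GB/s within "
          f"{ASSUMED_POWER_BUDGET_WATTS:.0f} W")
```

The ceiling scales inversely with energy per bit, so halving the pJ / bit figure doubles the bandwidth available under the same budget.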

X3D DRAM memory?

If you’ve seen AMD’s packaging roadmap, you might think right off the bat that the stacked memory in the X3D concept is a type of HBM memory, but it really isn’t.

It is a type of memory intended as a replacement for the current HBM2e, which AMD developed internally while designing the EHP for the “El Capitan” supercomputer. What are the particularities of this type of memory? We know very little about it, but the little we do know is this:

- It uses the X3D interface to communicate externally, which eliminates the need for AMD to add conversion elements from one type of interface to another.

- AMD would experiment with the use of cooling systems such as Peltier cells on top of this memory, in order to obtain higher clock speeds.

- AMD plans to include accelerators or coprocessors in the logic of this type of memory.

We don’t know if AMD will sell this in the home market in the future, but we will most likely see a scaled-down version of this technology. Will we see AMD-branded MCMs in which CPU + Memory + GPU + Accelerators are part of a whole? Who knows, but there is no doubt that AMD will also use this technology in the consumer market, for the creation of new chips.

Are we finally going to see high-end processors and GPUs working together in an MCM? Most likely, because that’s what AMD is aiming for.
